I’ve been reading Bayesian Modeling Using WinBUGS: An introduction. It’s a really nice intro to fitting complex Bayesian models with WinBUGS (aka OpenBUGS), which is a program that can sample from the posterior distribution of a Bayesian model’s parameters using MCMC methods (the GS in BUGS stands for Gibbs sampling). MCMC methods are very general, and allow one to fit very complex hierarchical models through brute force. Now in neuroscience we often have very long data that require less computationally expensive methods than MCMC to fit, so we use constrained models that are computationally tractable, like the GLM (generalized linear model) for fitting receptive field models of neurons (see Paninski’s class notes for details). So even though MCMC methods seemed awesome I’ve never had a chance to apply them in my day-to-day research.

Until now. I was faced with a pretty tough problem. Basically we’re estimating neuronal receptive fields with random stimuli. Now between batches of reverse correlation we do a manipulation (I could tell you what, but then I’d have to kill you) and we want to see whether this modifies the receptive field’s position and size. So the idea is to fit a parametric model for the RF envelope in each time epoch (a Gaussian) and then track the parameters over time to see if they change. Easier said then done. For one thing if you fit a Gaussian to the RF through least-squares there’s no guarantee that the error surface is well-behaved, there might be multiple local minima. If you wanted to, you could do random restarts, but you would have to ensure that you are tracking the same minimum through time. Second problem is that the data is noisy and we’re looking for subtle changes so you somehow have to smooth the RF parameters if you want to get reliable estimates of them.

So the solution I came up with is to assume a generative model for the RF where at the first time epoch the RF parameters are picked at random from some distribution (see here for a different solution path). At the subsequent time epoch the parameters are now the previous parameters plus some delta picked from a distribution (first-order Markov chain dynamics), and so on and so forth. This is conceptually related to the Kalman filter and HMMs, but standard methods are useless here because of the multiple nonlinearities involved.

On the other hand, it’s surprisingly straightforward to code up this model in WinBUGS and get samples from the posterior probability of the parameters. Chapter 9 of the book is really useful here, especially the bit about tracking Kobe Bryant’s sink percentages through seasons (the WinBUGS code for this is on the website). Now with the posterior probabilities I can construct point estimates of the model parameters (the mean of the samples) AND credible intervals AND because I’m using the expected value of the posterior rather than maximum likelihood I don’t get this multiple local minima BS.

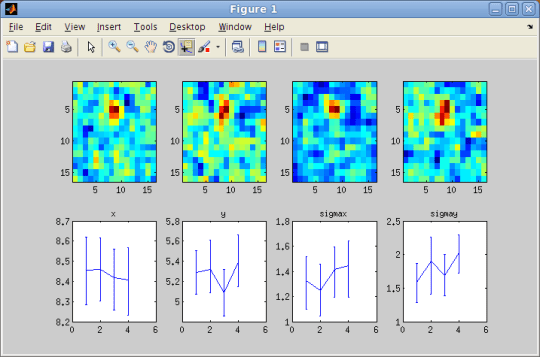

This is what you see at the top for one neuron, with the raw RFs in the 4 different epochs plotted at the top and the parameters of interest (x, y, sigma x, sigma y) with 90% credible intervals plotted at the bottom. As you can see, you get subpixel resolution this way and it doesn’t choke despite the fact that the data is pretty noisy. Pretty freaking cool.

3 responses to “Hierarchical Bayes and WinBUGS for tracking receptive fields”

[…] trains with GLMs. The same methods could potentially be used, of course, for other purposes, like tracking receptive fields. Of particular interest is a remarkably efficient Hamiltonian Monte Carlo (HMC) […]

[…] the second issue you may remember that earlier I discussed how to track receptive field envelopes through time using a hierarchical Bayesia…. The same basic idea can be used to fit a surface to a Gaussian. Simply cast the surface estimation […]

[…] to the Recommended Blogs section on the right. Some example posts: A Plexon C++ API on 64-bit Linux Using WinBUGS to track RFs Rotating a histogram in […]