VR has become affordable and accessible to consumers. Neuroscience research can benefit from the tight control of visual and auditory stimuli and the immersion that VR can afford. I compiled a list of the opportunities that cheap, commodity VR headsets bring to human neuroscience.

What can you do with VR?

Virtual navigation in VR

Negative example.

The graphics in this 2002 review are dated, but the content is still relevant today. VR has remained in use in neuroscience, typically for:

- Navigation and memory: blocked light VR can be used to simulate navigation in different environments, either on a treadmill or in a large empty room. We can study people’s navigation strategies, how cognitive maps develop, or how uncontrolled changes in the environment can hurt memory.

- Visual psychophysics: VR allows the precise presentation of binocular visual stimuli.

- Body representations and illusions: we can study body representations by modifying how one’s body is rendered. We can also create convincing representations of an arm that has been amputated or create full-body illusions. It’s even possible to change one’s body representation to that of an animal with a different body plan and study how representations evolve.

- Motor learning: thanks to precise tracking, we can study how complex motor skills are acquired and evolve over time.

- Rehabilitation and clinical research: stroke survivors can benefit from immersion in a virtual environment where their loss of function is not readily visible. Burn victims feel pain relief by immersing themselves in a cool environment. People with visual hemi-neglect can benefit from a functional evaluation in an ecologically relevant environment.

Consumer hardware

What’s really changed are the new opportunities brought by cheap consumer and prosumer headsets. I’m talking specifically about headsets with 6 degrees of freedom (6dof) tracking, which track both the orientation and the position of the head, and are available for anywhere between US$500 and US$3,000. These headsets come in a variety of shapes and sizes, with some popular examples including:

- The Oculus Quest. This standalone headset doesn’t require a computer and can be carried in a cereal-box-sized carrying case with a total weight of less than 2 lbs. That makes it the most portable 6dof headset on the market right now.

- The Oculus Rift S and Windows MR headsets such as the Samsung Odyssey, which are tethered to a computer with a beefy GPU. These use inside-out tracking, which means there’s no extra equipment (e.g. lighthouses) to install. They can still be somewhat mobile when paired with a gaming laptop. Access to a beefy GPU also means development can be easier because we are not resource-constrained.

- The Valve Index, a higher-end headset with controllers that don’t need to be constantly gripped, allowing a larger range of applications when looking at motor skill acquisition. This one requires lighthouses and therefore is not very portable.

- The HTC Vive Pro Eye. This brings eye tracking to the table, important for visual neuroscience applications. It also supports affordable foot representation and representing real objects in the virtual world with extra trackers.

Disclaimer: I worked for Oculus Research/FRL for two years, so I’m more familiar with Oculus products. Please mention it if I’ve missed an important piece of hardware.

These can be connected to many accessories that can potentially improve the range of opportunities, for instance treadmills, external tracking sensors for full body tracking, and devices that bring hand presence without controllers.

What does VR commodification mean for the lab?

Better tools

Many old reviews of VR in neuroscience state that the tools are hard to use. I distinctly remember sitting in an undergrad class well over ten years ago with Dr. Veronique Bohbot sharing how she struggled with Unreal Tournament to implement an 8-arm maze.

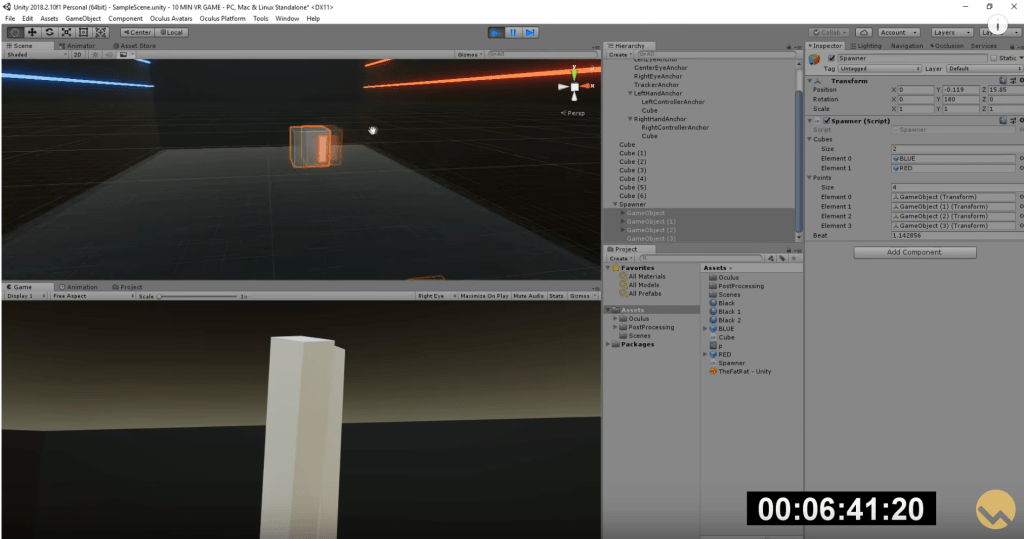

The tools have come a long way since then. To give you a concrete example of this, this tutorial shows Valem making a Beat Saber clone in VR in Unity3d in 10 minutes! Experiences can be developed in a GUI and immediately tested on a development PC. They’re accessible for someone with a programming background, and are often free for personal or academic use. The most commonly used tools are game engines with support for VR experiences:

- Unity, which is programmed in C# and is quite approachable for beginners. There are frameworks built on top of Unity, such as the Unity Experiment Framework and VREX, that make it easier to create human behaviour experiments.

- Unreal Engine, which is scripted in C++. It tends to have a steeper learning curve but the rendering is top-notch, which can be important for immersion.

In addition, there are tools that are built especially for researchers. Vizard is a research platform that allows one to script experiences in Python. It has plugins for a lot of specialized research-grade hardware (extra trackers, data gloves). It also has comprehensive data management features built-in, e.g. replaying interactions, processing data in realtime with numpy/scipy, and streaming data out in realtime.
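To give a flavor of what processing tracking data in realtime can look like, here is a minimal sketch in plain Python. It does not use Vizard's API or numpy: the `SpeedEstimator` class, the 90 Hz sample rate, and the synthetic head positions are all assumptions made up for illustration. It keeps a short sliding window of position samples and reports the net displacement over that window divided by the elapsed time.

```python
import math
from collections import deque


class SpeedEstimator:
    """Running estimate of head speed from streamed position samples.

    Hypothetical helper for illustration; not part of any VR toolkit.
    """

    def __init__(self, window=5):
        # Keep only the most recent `window` (time, position) samples.
        self.samples = deque(maxlen=window)

    def update(self, t, position):
        """Add a sample (seconds, (x, y, z) in meters); return speed in m/s."""
        self.samples.append((t, position))
        if len(self.samples) < 2:
            return 0.0
        (t0, p0), (t1, p1) = self.samples[0], self.samples[-1]
        if t1 <= t0:
            return 0.0
        # Net displacement across the window divided by elapsed time.
        return math.dist(p0, p1) / (t1 - t0)


# Synthetic 90 Hz stream: the head moves along x at a constant 0.5 m/s.
estimator = SpeedEstimator(window=5)
speed = 0.0
for i in range(20):
    t = i / 90.0
    speed = estimator.update(t, (0.5 * t, 1.6, 0.0))
print(f"estimated head speed: {speed:.3f} m/s")
```

In a real experiment the loop would be fed by whatever the engine reports each frame, and the same pattern extends to hand positions or angular velocity.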

For more specialized visual psychophysics, PsychoPy and Psychtoolbox support the Oculus Rift, Rift S and the Quest via the Oculus Link. Rounding out the picture are WebVR and frameworks built on top of it, like aframe and THREE.js, which allow VR content to be authored in HTML and delivered via a web browser.

More content

Programmers and grad students don’t often have a background in 3d modeling. Nevertheless, complex environments and situations can be created with existing tools. The Unity store contains a wide range of free assets for download, from trees to grass textures to fully developed games. Google Poly showcases pre-built objects that can be readily imported into Unity.

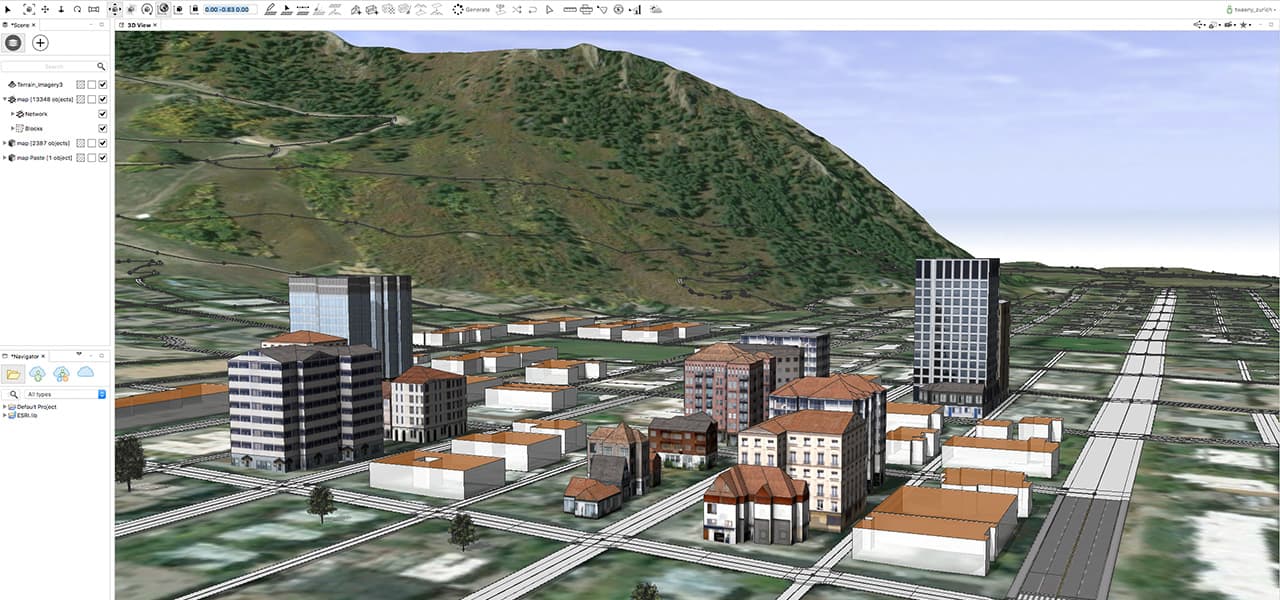

The content can be personalized to the subject to make it emotionally salient when that’s relevant. If you’re studying navigation, for example, you can recreate the neighborhood or even the whole city you’re in, complete with buildings and textures, with tools like CityEngine and CityGen3d.

Subjects themselves may have emotionally salient content that they can use, in particular 360 degree panoramas which can be taken with commodity mobile phones. I remember vividly the first time I viewed a panorama taken in Sequoia National Park three years prior – I felt transported. I cried. This is exactly the kind of stimulus you’d want if you’re studying the interaction of emotion, memory, and navigation, and it had been sitting in my Google Photos for three years.

3d scanning and 360 degree cameras also allow content to be created. There’s a big ecosystem of tools to create VR content so students and postdocs don’t have to become artists to make their experiments work.

Better apps

VR has already had its first million-seller with Beat Saber. Hundreds of available VR games and experiences can potentially be used fruitfully in neuroscience research.

The Courtois Neuromod project aims to record hundreds of hours of fMRI and MEG data from a dozen subjects. Subjects play 16-bit era games in a scanner, engaging a wide range of cognitive processes: visual and auditory sensory processing, planning, 2d navigation, reward, motor commands, etc. This turns the concept of gamification on its head: the game is the experiment. The subjects don’t fall asleep, and they perform actions which are ecologically relevant. VR games could add to the mix 3d navigation, locomotion, embodiment, tool use, and so forth.

One issue with applying this to VR games is that researchers will want to have access to the source code to hook into existing games and export useful information, e.g. position of the hands, head, and world. Many clones of popular games are available on the Unity Store, with their source, for a pittance. Researchers may also consider reaching out to game development studios to add features to their games to allow this exporting, or even to develop games made for research from the ground up, as the success of Sea Hero Quest shows.
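As a rough idea of what such an export could contain, here is a minimal Python sketch of a timestamped pose log written as CSV. Everything in it is an assumption for illustration: the `PoseSample` record, its column names, and the two sample rows are invented, and a real hook would live inside the game engine (for instance as a C# script in Unity) rather than in a standalone script.

```python
import csv
import io
from dataclasses import astuple, dataclass, fields


@dataclass
class PoseSample:
    """One tracked 6dof pose: position in meters, orientation as a quaternion.

    Hypothetical record layout, chosen for illustration only.
    """
    t: float      # seconds since the start of the session
    device: str   # e.g. "head", "left_hand", "right_hand"
    x: float
    y: float
    z: float
    qx: float
    qy: float
    qz: float
    qw: float


def write_samples(samples, stream):
    """Write samples as CSV rows, preceded by a header naming each column."""
    writer = csv.writer(stream)
    writer.writerow([f.name for f in fields(PoseSample)])
    for sample in samples:
        writer.writerow(astuple(sample))


# Two made-up samples standing in for what a game hook would export.
samples = [
    PoseSample(0.000, "head", 0.0, 1.6, 0.0, 0.0, 0.0, 0.0, 1.0),
    PoseSample(0.011, "right_hand", 0.2, 1.1, 0.3, 0.0, 0.0, 0.0, 1.0),
]
buffer = io.StringIO()
write_samples(samples, buffer)
print(buffer.getvalue())
```

A flat, one-row-per-sample layout like this is easy to load into analysis tools later. Game events (a block being hit, a trial starting) could go in a second file keyed on the same timestamps.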

At-home experiments

Installing VR has become much simpler with inside-out tracking. That’s especially the case with the Oculus Quest, which doesn’t require a computer. It’s not impossible to think of lending a subject a headset for a multi-day task, for example to look at learning effects in visual or motor tasks.

We can think of it as a smaller scale version of crowdsourcing platforms such as Amazon Mechanical Turk. Here, however, the hardware is tightly controlled. This could be especially useful for people studying rehabilitation, e.g. in the case of stroke – the progression of rehabilitation can be tracked every day.
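As a sketch of what day-by-day tracking could look like on the analysis side, the Python snippet below averages a per-trial score by day and fits a straight-line trend across days. The data, the choice of reach time as the score, and the function names `daily_means` and `slope` are all made up for illustration. A real study would likely want a proper learning-curve model rather than a straight line.

```python
from collections import defaultdict
from statistics import mean


def daily_means(trials):
    """Average a per-trial score by day. `trials` is a list of (day, score)."""
    by_day = defaultdict(list)
    for day, score in trials:
        by_day[day].append(score)
    return {day: mean(scores) for day, scores in sorted(by_day.items())}


def slope(points):
    """Ordinary least-squares slope of y against x for (x, y) pairs.

    Needs at least two distinct x values, otherwise the denominator is zero.
    """
    xs, ys = zip(*points)
    x_bar, y_bar = mean(xs), mean(ys)
    num = sum((x - x_bar) * (y - y_bar) for x, y in points)
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


# Made-up data: reach time in seconds (lower is better) over three days.
trials = [(1, 2.0), (1, 2.2), (2, 1.8), (2, 1.6), (3, 1.3), (3, 1.5)]
means = daily_means(trials)
trend = slope(list(means.items()))
print(means)
print(f"change per day: {trend:.2f} s")
```

A negative slope here means reach times are getting shorter from one day to the next.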

Conclusions

VR commodification means that you don’t have to be a VR lab to do VR in your lab. Development is straightforward and the equipment is cheap, so it can be integrated as one component into a larger project. That offers many new opportunities for enterprising labs to learn more about the brain.